How often do you hear someone say they’re an SEO expert?

Quite often?

But do these SEOs really know technical SEO?

I have long believed that most SEOs lack basic knowledge of technical SEO. Hence, I decided to run a poll with a very basic question that I believe every SEO must be able to answer.

Below was the question:

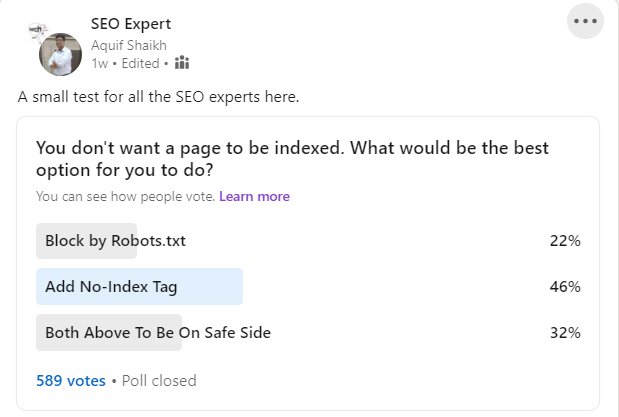

Q. You don't want a page to be indexed. What would be the best option for you to do?

And below were the options for the poll:

- Block by Robots.txt

- Add No-Index Tag

- Both Above To Be On Safe Side

Pretty straight and simple, right?

But guess what?

54% of SEOs got this answer wrong. And the sample size wasn't too small either: a total of 573 participants voted in this poll.

As you can see, 22% of SEOs think robots.txt is the best way to make sure a page is not indexed, and 32% think combining a robots.txt block with a no-index tag would be a great idea.

Only 46% of the SEOs got the answer right: you should use the no-index tag ONLY.

Why Did Most Users Get The Answer Wrong?

Let us analyze what made those SEOs choose those answers and why they are the wrong way to stop Google from indexing pages.

As you all know, robots.txt is a file of directives for bots, including search engine bots, that tells them which parts of a website they may crawl and which they may not.

Most search engine bots, including Googlebot, follow the rules in the robots.txt file and will not crawl a page that the file blocks.

The obvious logic says that since Googlebot won't crawl the page, it won't index it either.

This was the reason some people picked the robots.txt option in the poll.
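To make the crawl rule concrete, here is a minimal sketch using Python's standard `urllib.robotparser`. The robots.txt rules, the `/private/` path, and the `example.com` URLs are all assumptions made up for illustration. Note that the check only answers "may this URL be crawled?", and says nothing about indexing.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content; the /private/ path is an assumption.
ROBOTS_TXT = """\
User-agent: *
Disallow: /private/
"""


def may_crawl(url, user_agent="Googlebot"):
    """Return True if the rules above allow this user agent to crawl the URL."""
    parser = RobotFileParser()
    parser.parse(ROBOTS_TXT.splitlines())
    return parser.can_fetch(user_agent, url)


print(may_crawl("https://example.com/private/report.html"))  # False
print(may_crawl("https://example.com/blog/post.html"))       # True
```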

The Catch

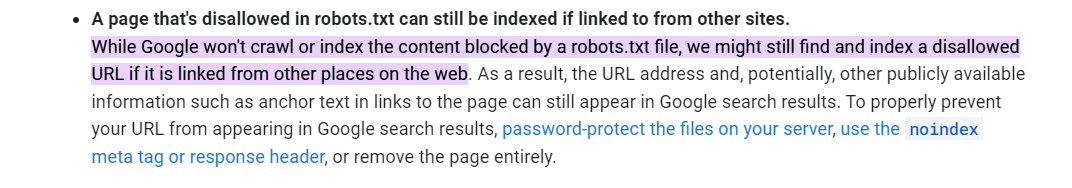

However, there is a catch. As per Google, it will respect the rules in the robots.txt file and will not crawl or index the content of the page. However, if the page is linked from anywhere else on the internet, Google may still index its URL.

Below is the specific guideline from Google. You can find it at the link below:

https://developers.google.com/search/docs/advanced/robots/

Watch out for the part about disallowed URLs that are linked from other places on the web:

While Google won't crawl or index the content blocked by a robots.txt file, we might still find and index a disallowed URL if it is linked from other places on the web. As a result, the URL address and, potentially, other publicly available information such as anchor text in links to the page can still appear in Google search results. To properly prevent your URL from appearing in Google search results, password-protect the files on your server, use the noindex meta tag or response header, or remove the page entirely.

So relying on robots.txt to keep a web page out of the index is not a great idea.
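The guideline above mentions the noindex meta tag and response header. As a rough sketch of how you could check a page for either one, the snippet below uses Python's standard `html.parser`. The class and function names are my own, and it is deliberately simplified: it ignores user-agent-specific forms of the `X-Robots-Tag` header.

```python
from html.parser import HTMLParser


class RobotsMetaParser(HTMLParser):
    """Collects directives from <meta name="robots"> and <meta name="googlebot"> tags."""

    def __init__(self):
        super().__init__()
        self.directives = []

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attr = {name: (value or "") for name, value in attrs}
        if attr.get("name", "").lower() in ("robots", "googlebot"):
            content = attr.get("content", "")
            self.directives += [d.strip().lower() for d in content.split(",")]


def has_noindex(html, headers=None):
    """Return True if the HTML or the response headers carry a noindex directive."""
    parser = RobotsMetaParser()
    parser.feed(html)
    header_directives = []
    for name, value in (headers or {}).items():
        if name.lower() == "x-robots-tag":
            header_directives += [d.strip().lower() for d in value.split(",")]
    return "noindex" in parser.directives or "noindex" in header_directives


page = '<html><head><meta name="robots" content="noindex, follow"></head></html>'
print(has_noindex(page))
print(has_noindex("<html></html>", {"X-Robots-Tag": "noindex"}))
```

Either signal only works if the crawler is allowed to fetch the page and read it.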

IMPORTANT NOTE

Please note that Google used to honor a no-index directive placed in robots.txt itself, although this was never officially documented. In July 2019, Google announced that it would stop supporting it, and the change took effect on September 1, 2019.

But Why Not Use A Combination Of Both?

Now the next question arises: why is the third option, i.e. blocking through robots.txt in addition to using a no-index tag, a wrong answer?

The answer here is simple. When a page is linked from elsewhere on the internet, Google will try to crawl it. But the very first thing Google does is look up the robots.txt file.

As soon as it sees that the page is blocked by robots.txt, it stops the crawl and therefore never sees the no-index tag. Thus it can still end up indexing the URL, just as if there were no no-index tag at all.
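The reasoning above can be captured in a toy model. This is only a simplification of the argument in this article, not Google's actual pipeline, and the function and parameter names are made up for illustration.

```python
def can_end_up_indexed(blocked_by_robots, has_noindex, linked_elsewhere):
    """Toy model: can the URL still show up in the index?"""
    if blocked_by_robots:
        # The page is never fetched, so any noindex tag on it goes unseen.
        # The bare URL can still be indexed if other pages link to it.
        return linked_elsewhere
    # The page is crawled, so a noindex tag (if present) is seen and honored.
    return not has_noindex


# The three poll options, assuming the page is linked from somewhere else.
options = {
    "Block by robots.txt": (True, False),
    "Add no-index tag": (False, True),
    "Both": (True, True),
}
for label, (blocked, noindex) in options.items():
    print(label, "->", can_end_up_indexed(blocked, noindex, linked_elsewhere=True))
```

Under these assumptions, only the no-index-only option reliably keeps the URL out of the index.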

Why Is This Important To Know?

Such aspects of technical SEO are very important for all SEOs to know, as they can affect how your website is indexed. Yet it is surprising how many SEOs get this wrong.

So, the next time someone asks you this question in an interview or otherwise, answer it with confidence that you should use the no-index tag.

Other Ways To Make Sure A Page Is Not Indexed

The other ways to make sure a page is not indexed are to password-protect it or to return a 404 (Not Found) or 410 (Gone) status code.
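As an illustration of the status-code approach, here is a minimal WSGI sketch that answers 410 for pages you have removed. The `REMOVED_PATHS` set and the `/old-campaign` path are hypothetical, and a real site would normally configure this in its web server or CMS instead.

```python
from http import HTTPStatus

# Hypothetical set of paths that have been permanently removed.
REMOVED_PATHS = {"/old-campaign"}


def app(environ, start_response):
    """Tiny WSGI app: 410 Gone for removed paths, 200 OK for everything else."""
    path = environ.get("PATH_INFO", "/")
    if path in REMOVED_PATHS:
        status = HTTPStatus.GONE
        body = b"This page has been removed."
    else:
        status = HTTPStatus.OK
        body = b"Hello"
    headers = [
        ("Content-Type", "text/plain; charset=utf-8"),
        ("Content-Length", str(len(body))),
    ]
    start_response(f"{status.value} {status.phrase}", headers)
    return [body]
```

To try it locally, you could serve `app` with `wsgiref.simple_server.make_server("", 8000, app).serve_forever()` from the standard library.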

What if You Accidentally Got Your Page Indexed?

If you accidentally got a page indexed, you can return a 404 or 410 for it or mark it as no-index, and then either wait for Google to recrawl the URL or use the Removals tool in Google Search Console to take the URL down quickly.

What's your view on this poll? Share with us in the comments.